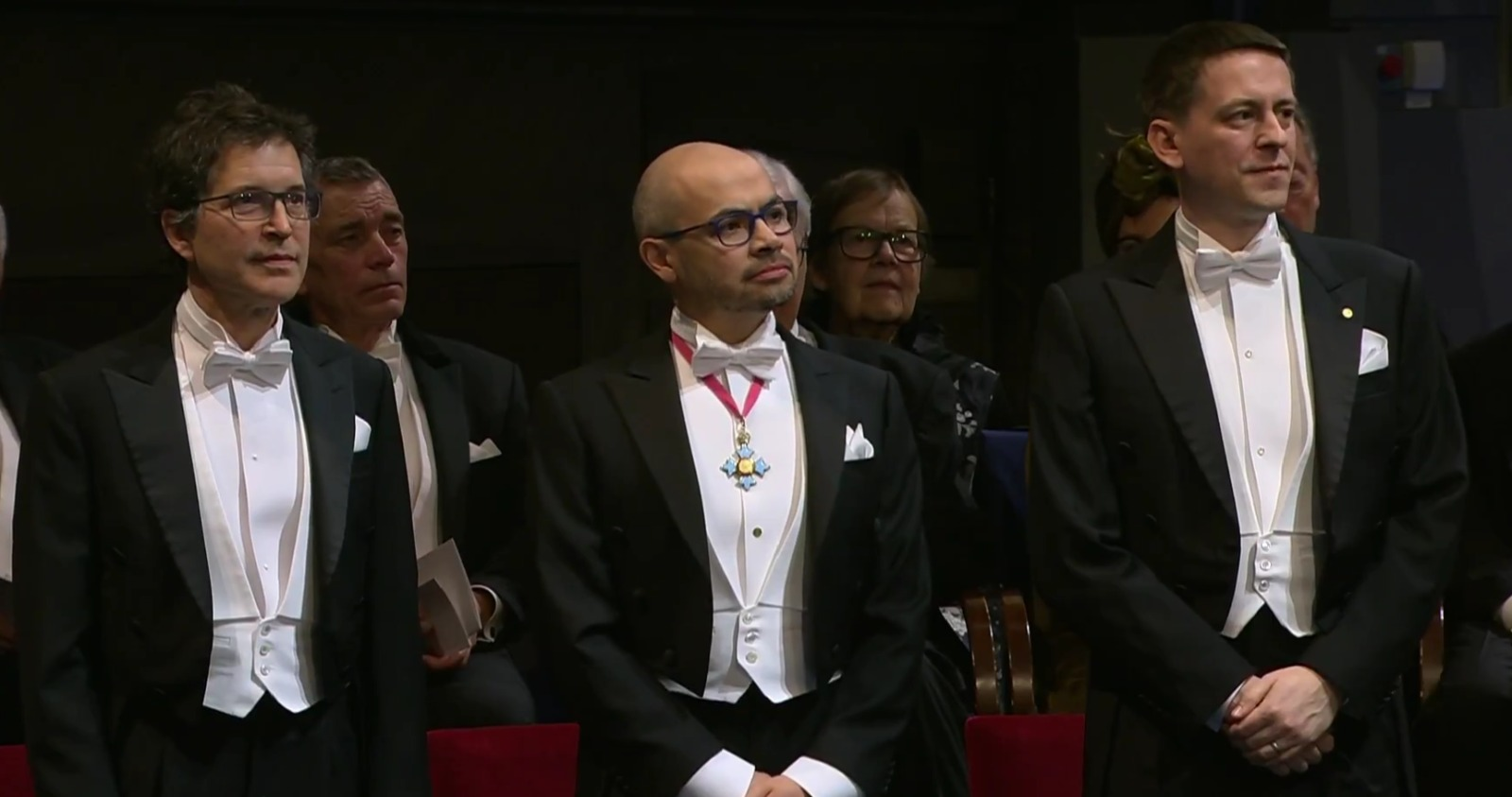

Will AI be able to cure diseases? The answer is still uncertain, but there is no doubt that people are working to make it happen. To get there, we first need to get familiar with the world of proteins and with how deep learning revolutionized it, to the point that last year the Nobel Prize in Chemistry went to two groups of scientists who developed computational and AI tools for proteins. David Baker received half of it "for computational protein design", and Demis Hassabis and John Jumper shared the other half "for protein structure prediction".

What is also remarkable is how quickly relevant results were achieved, with a rapidly growing impact on health. Like most AI models we know and use, all of this was made possible by the Transformer architecture, introduced in 2017 (that's where the T in ChatGPT comes from). Its self-attention mechanism turned out to work across very different domains, such as text or images, and three years later it was also applied to the domain we're interested in for this article: proteins.

Being able to design proteins with specific functions would be a turning point for the health industry (and many others) as we know it. Developing synthetic antibodies, producing vaccines in shorter timeframes, or tailoring therapies to each patient are just some of the applications that could start to become reality.

What are proteins

We can say that proteins are the fundamental machinery of life. Less poetically, they are long chains of amino acids (AA), small molecules that bond together one after another. Nature uses just 20 standard amino acids, yet they combine into an endless variety of proteins: many have a few hundred AA, some are much shorter, and others are made up of thousands and thousands of AA.
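Because a protein is essentially a chain drawn from a 20-letter alphabet, it is usually written as a string with one letter per amino acid. The Python sketch below assumes that only the 20 standard one-letter codes are allowed (real sequence files can also contain extra symbols, such as X for an unknown residue). The fragment is an arbitrary example string, and the function names are hypothetical.

```python
from collections import Counter

# The 20 standard amino acids, written with their one-letter codes.
STANDARD_AA = set("ACDEFGHIKLMNPQRSTVWY")


def validate_sequence(seq: str) -> str:
    """Normalize a sequence and reject letters outside the 20 standard AA."""
    seq = seq.strip().upper()
    unknown = set(seq) - STANDARD_AA
    if unknown:
        raise ValueError(f"non-standard residues: {sorted(unknown)}")
    return seq


def composition(seq: str) -> dict[str, float]:
    """Fraction of the chain made up by each amino acid present."""
    seq = validate_sequence(seq)
    counts = Counter(seq)
    return {aa: counts[aa] / len(seq) for aa in sorted(counts)}


# Arbitrary example fragment, used only for illustration.
fragment = "MKTAYIAKQRQISFVKSHFSRQ"
print(len(fragment))            # number of residues in the chain
print(composition(fragment))    # e.g. how much of the chain is K, Q, S...
```

A plain string keeps the sketch simple; it captures only the order of the chain, not its 3D shape, which is exactly the information the rest of this article is about.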

Because these macromolecules are so diverse, they serve different functions throughout our body. Perhaps the best known are those that make up muscle tissue (which is why we talk about "eating protein" when we eat meat), but there are also enzymes, which facilitate or accelerate chemical reactions, and transport proteins like hemoglobin, which carries oxygen through the blood.

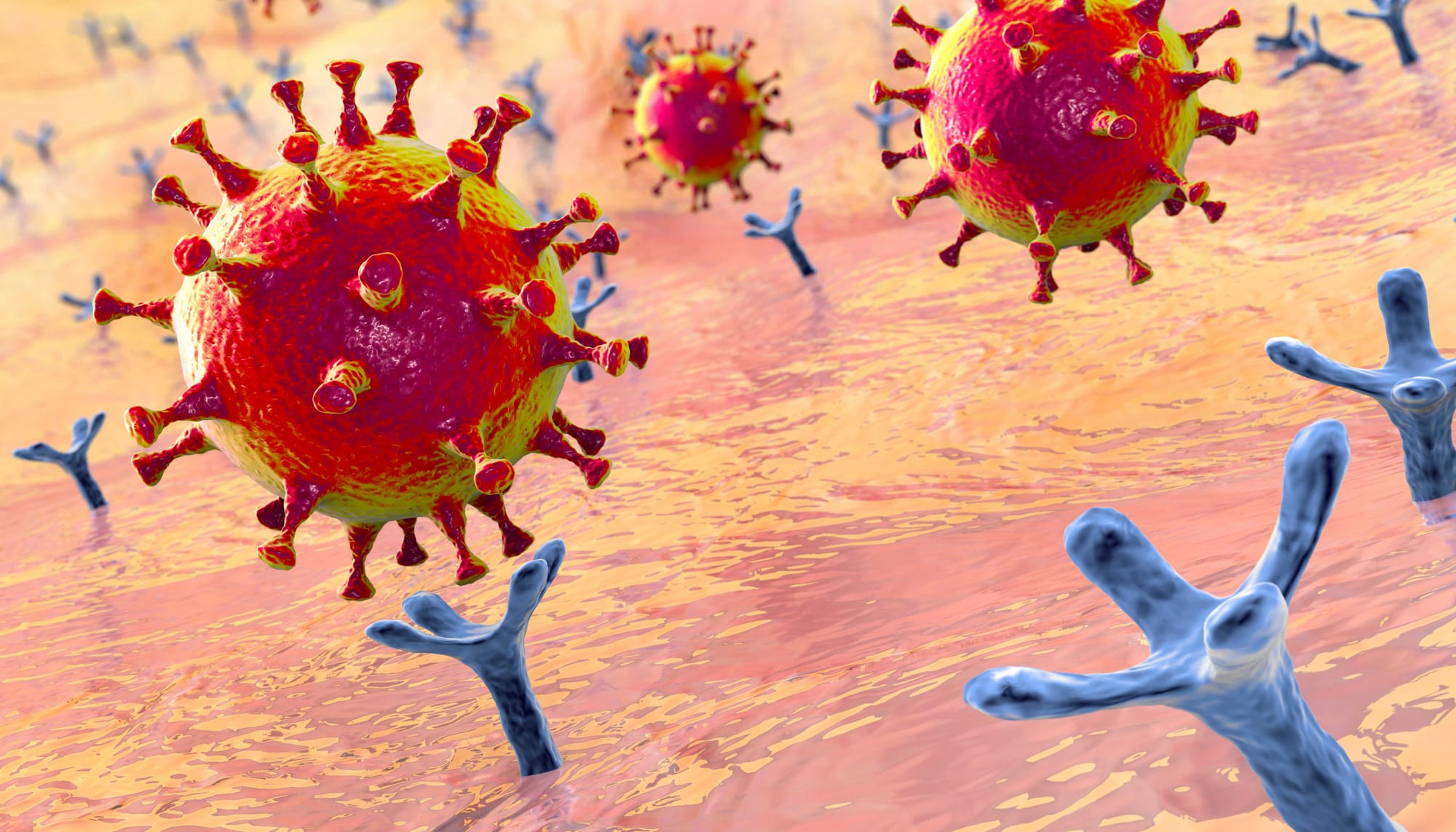

Other roles include antibodies, which are part of the immune system; signaling and regulatory proteins; and membrane and recognition proteins. In this last category, the Spike protein, located on the membrane of the SARS-CoV-2 virus, became very well known during the pandemic (war flashbacks). The Spike protein has a high affinity for the ACE2 receptor, an enzyme (yes, another protein) present on the membrane of cells in various organs, such as the lungs or the heart. When these two molecules bind, with other processes and more proteins also involved, the virus releases its genetic material inside the cell, causing the infection.

How proteins are studied

Knowing the structure of a protein is key to understanding its function. For example, to develop a vaccine against Covid (there are different technologies, but here we'll focus on the "recombinant protein" approach), the Spike protein is produced in vitro, separately from the rest of SARS-CoV-2. Because its structure is known, specific modifications can be introduced to keep it in the same shape it has on the virus membrane (the prefusion state, before it binds to the human cell receptor). This step matters because, when the vaccine is administered, the immune system responds to the Spike with antibodies that block its binding to the ACE2 receptor. These antibodies attach to the Spike, and the immune system "remembers" this response in case the actual virus enters the body.

The challenge is how researchers can analyze a protein that, in this case, has ~1,200 AA and measures ~15 nm (that is, 0.000015 mm, minuscule). This was a serious bottleneck for biotechnological development based on these molecules, and breaking it is exactly what AI models came to do.

The standard method is X-ray crystallography, which first requires obtaining the protein in a stable form and in considerable quantities. The sample then has to be purified and its medium chemically adjusted to grow crystals, in which many copies of the protein arrange themselves in a repetitive, uniform pattern. This step is crucial and complicated, since not all proteins form crystals. The crystals are then exposed to X-rays, producing diffraction patterns that record how the X-rays were scattered by the crystal. The final stage is highly complex and involves Fourier transforms, electron density maps, and structural modeling software. The output of this entire process is a detailed atomic model of the protein.
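The Fourier step can be illustrated with a one-dimensional toy. Each measured reflection h corresponds to a structure factor F(h) = Σ f_j·exp(2πi·h·x_j), summed over the atoms j at positions x_j with scattering strengths f_j, and the electron density is recovered by summing these factors back (a Fourier synthesis). The catch, known as the phase problem, is that the detector records only the intensities |F(h)|², so the phases have to be recovered by other means. This sketch assumes point atoms in a 1D unit cell with made-up positions and strengths; real crystallography works in 3D over indices (h, k, l).

```python
import numpy as np

# Toy 1D "crystal": atom positions as fractions of the unit cell, with
# made-up scattering strengths (heavier atoms scatter more strongly).
positions = np.array([0.12, 0.40, 0.71])
strengths = np.array([6.0, 8.0, 16.0])

# Reflection indices h that a (toy) experiment would measure.
h = np.arange(-20, 21)

# Structure factors: F(h) = sum_j f_j * exp(2*pi*i*h*x_j)
F = (strengths[None, :]
     * np.exp(2j * np.pi * h[:, None] * positions[None, :])).sum(axis=1)


def fourier_synthesis(F, h, n_grid=500):
    """Density on a grid, proportional to sum_h F(h) * exp(-2*pi*i*h*x)."""
    x = np.arange(n_grid) / n_grid
    rho = (F[None, :] * np.exp(-2j * np.pi * x[:, None] * h[None, :])).sum(axis=1)
    return x, rho.real


# With the true phases, the density peaks on the atoms (highest on the heaviest).
x, rho = fourier_synthesis(F, h)
print("peak with phases at x =", x[np.argmax(rho)])

# With amplitudes only (phases lost), the peak moves to the origin instead.
x_np, rho_no_phase = fourier_synthesis(np.abs(F), h)
print("peak without phases at x =", x_np[np.argmax(rho_no_phase)])
```

Comparing the two peaks shows why the final stage needs extra work: the amplitudes alone do not place the atoms.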

AlphaFold and RFdiffusion

The two models that made waves in the scientific community were AlphaFold (developed by Demis Hassabis and John Jumper) and RoseTTAFold/RFdiffusion (led by David Baker).

In 2010, Hassabis, together with Shane Legg (who is still part of the company, though less frequently cited) and Mustafa Suleyman (who left the company in 2019), founded DeepMind, a company focused on artificial general intelligence (AGI) and on Reinforcement Learning (RL) applied to games. In 2014, Google acquired it for about 500 million dollars. Years later, in 2018, the team launched AlphaFold1, which applied deep learning to protein structure prediction: it used CNNs to predict geometric parameters (distances and angles) between pairs of amino acids and then reconstructed the protein's structure from them.
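To get a feel for the "distances to structure" step, here is a minimal sketch that recovers 3D coordinates from a matrix of pairwise distances. AlphaFold1 itself predicted distance distributions and optimized the structure against them; this example swaps in a simpler textbook technique, classical multidimensional scaling (MDS), and assumes the distances are exact. The "true" coordinates are random stand-ins for residue positions, not a real protein.

```python
import numpy as np


def pairwise_distances(X: np.ndarray) -> np.ndarray:
    """Euclidean distance between every pair of rows in X."""
    diff = X[:, None, :] - X[None, :, :]
    return np.linalg.norm(diff, axis=-1)


def coords_from_distances(D: np.ndarray, dim: int = 3) -> np.ndarray:
    """Classical MDS: coordinates (up to rotation, reflection and
    translation) whose pairwise distances reproduce D."""
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n          # centering matrix
    B = -0.5 * J @ (D ** 2) @ J                  # Gram matrix of centered coords
    eigvals, eigvecs = np.linalg.eigh(B)         # eigenvalues in ascending order
    top = np.argsort(eigvals)[::-1][:dim]        # keep the `dim` largest
    return eigvecs[:, top] * np.sqrt(np.clip(eigvals[top], 0.0, None))


# Random stand-in positions for a 10-residue chain (in Angstrom).
rng = np.random.default_rng(0)
true_xyz = rng.normal(scale=5.0, size=(10, 3))
D = pairwise_distances(true_xyz)   # stands in for predicted AA-pair distances

recovered = coords_from_distances(D)
# The absolute orientation is arbitrary, but all pairwise distances match.
print(np.abs(pairwise_distances(recovered) - D).max())
```

With noisy predicted distances, as in the real problem, an exact reconstruction no longer exists, which is one reason optimization-based approaches were used instead.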

While it was a milestone, it was still limited in how accurately it placed atoms. The real breakthrough came with AlphaFold2, whose architecture is built on the self-attention mechanisms of Transformers (the modified Transformer was named the Evoformer). Applied to the AA sequence, attention captures structural relationships between AA that may be far apart in the sequence but, due to folding, end up as "neighbors" in the 3D structure.
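The core idea of self-attention fits in a few lines: every position in the sequence computes a weight for every other position, so distance along the chain is no obstacle. The Evoformer is considerably more elaborate (among other things, it attends over alignments of related sequences and over pairs of AA); this sketch shows only basic single-head scaled dot-product attention, with random, untrained weights and random residue embeddings as assumptions.

```python
import numpy as np


def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention.

    X: (L, d) array, one embedding per amino acid. Returns the (L, d)
    outputs and the (L, L) weights, where row i says how much residue i
    attends to each residue j.
    """
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)    # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # softmax over each row
    return weights @ V, weights


rng = np.random.default_rng(42)
L, d = 8, 16                          # 8 residues, 16-dim embeddings
X = rng.normal(size=(L, d))           # random stand-in residue embeddings
Wq, Wk, Wv = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(3))

out, attn = self_attention(X, Wq, Wk, Wv)
# Residue 0 weighs residue 7 directly, however far apart they are in sequence.
print(attn.shape, attn[0, 7])
```

In a trained model, large weights between residues that are distant in sequence are one way the network can express that they end up close in 3D.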

Up to that point, the structures of about 120 thousand proteins were known, the result of years of research using standard techniques like the crystallography described above. After AlphaFold2 appeared, more than 200 million predicted structures became accessible through the AlphaFold DB, maintained by DeepMind together with EMBL's European Bioinformatics Institute (EMBL-EBI).

Baker's work, on the other hand, dates back to the early 2000s with Rosetta, a program that used physical and energy-based models to predict and design proteins in silico (that is, on a computer). His approach relied on classical computational algorithms rather than AI tools. During the 2010s, the team kept working and began incorporating classical machine learning, such as random forests or regressions, until in 2021 they launched RoseTTAFold, whose architecture was inspired by AlphaFold2 but is less computationally demanding, and which was released as open source.
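Energy-based tools like Rosetta search for low-energy structures using Monte Carlo sampling with the Metropolis acceptance rule: propose a small random change, always keep it if the energy drops, and sometimes keep it even if the energy rises, so the search can escape local traps. The sketch below applies simulated annealing to a handful of angles with a hypothetical toy energy; real Rosetta score functions combine many physical and statistical terms and are far richer than this.

```python
import math
import random


def toy_energy(angles, targets):
    """Hypothetical energy: zero when every angle matches its preferred value."""
    return sum(1.0 - math.cos(a - t) for a, t in zip(angles, targets))


def metropolis_anneal(targets, steps=20000, t_start=2.0, t_end=0.01, seed=1):
    rng = random.Random(seed)
    angles = [rng.uniform(-math.pi, math.pi) for _ in targets]
    energy = toy_energy(angles, targets)
    best, best_energy = list(angles), energy
    for step in range(steps):
        # Geometric cooling schedule from t_start down to t_end.
        temp = t_start * (t_end / t_start) ** (step / (steps - 1))
        i = rng.randrange(len(angles))
        trial = list(angles)
        trial[i] += rng.gauss(0.0, 0.3)          # small random move
        trial_energy = toy_energy(trial, targets)
        delta = trial_energy - energy
        # Metropolis criterion: accept downhill moves, uphill with prob e^(-dE/T).
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            angles, energy = trial, trial_energy
            if energy < best_energy:
                best, best_energy = list(angles), energy
    return best, best_energy


targets = [-1.0, 0.5, 2.0, -2.5, 1.2]   # hypothetical preferred angles (radians)
best, best_energy = metropolis_anneal(targets)
print(round(best_energy, 4))
```

The high starting temperature lets the search wander widely; as it cools, uphill moves become rare and the configuration settles into a low-energy state.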

RFdiffusion appeared in 2023, and its innovation is that it lets you specify design constraints as input, such as a target the final protein should bind to or a functional motif it must contain. Architecturally, the model applies the generative diffusion process: during training, noise is added to real protein structures and the network, built on RoseTTAFold and its attention mechanisms over 3D coordinates, learns to remove it; when generating, it starts from noise and progressively denoises it into a "clean" protein structure. As a result, the model not only predicts structures but can design proteins with specific functions. Like its predecessor, RFdiffusion is also open source.
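The "add noise, learn to remove it" idea can be sketched with the standard DDPM-style forward process, x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε, where ᾱ_t shrinks toward zero as the step t grows. This is a simplification under stated assumptions: RFdiffusion also noises each residue's orientation, not just its position, and the linear schedule and random "structure" here are illustrative choices, not the model's actual settings.

```python
import numpy as np


def make_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule; alpha_bar[t] is the fraction of signal left at step t."""
    betas = np.linspace(beta_start, beta_end, T)
    return np.cumprod(1.0 - betas)


def add_noise(x0, t, alpha_bar, rng):
    """Forward process: x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps."""
    eps = rng.normal(size=x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return xt, eps


def recover_x0(xt, eps_pred, t, alpha_bar):
    """Clean structure implied by a denoiser's prediction of the noise."""
    return (xt - np.sqrt(1.0 - alpha_bar[t]) * eps_pred) / np.sqrt(alpha_bar[t])


rng = np.random.default_rng(0)
alpha_bar = make_schedule()
x0 = rng.normal(scale=3.0, size=(50, 3))   # random stand-in coords for 50 residues

xt, eps = add_noise(x0, t=999, alpha_bar=alpha_bar, rng=rng)  # almost pure noise
# A perfect network would predict eps exactly and recover the clean structure;
# training pushes a real network's noise prediction toward that target.
print(np.allclose(recover_x0(xt, eps, 999, alpha_bar), x0))
```

Generation runs this in reverse: starting from pure noise, the trained network's predictions are used step by step to move toward a plausible structure, with the design constraints steering where it ends up.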

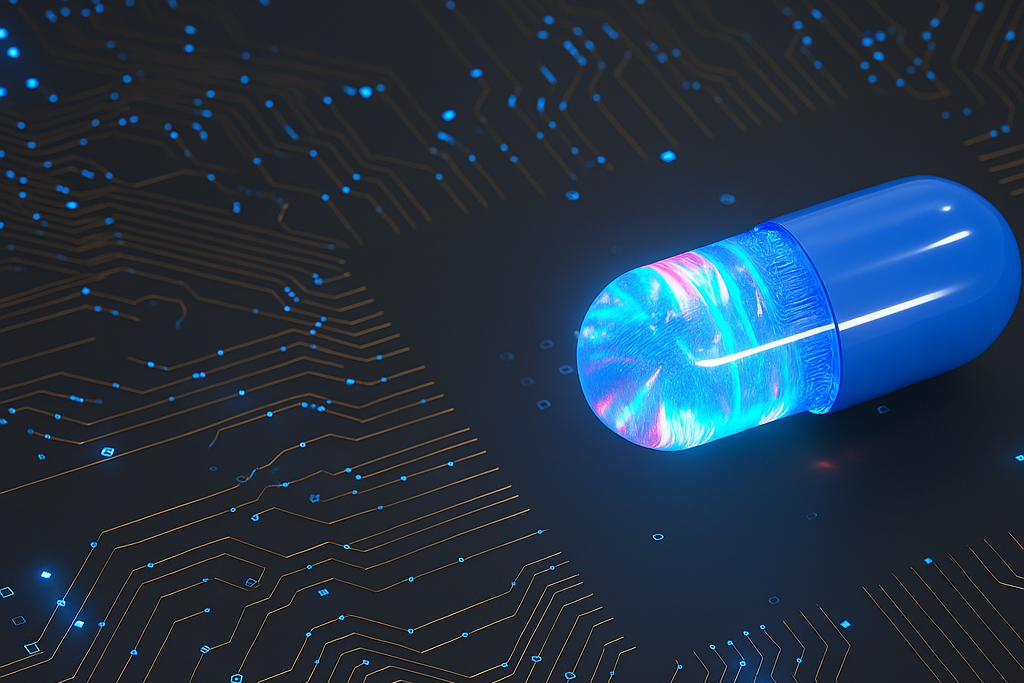

In May of last year, AlphaFold3 was published, developed by DeepMind in collaboration with Isomorphic Labs, another company founded by Hassabis that also belongs to Google's parent company, Alphabet Inc. The key advance of this model is that it can predict ligand-receptor interactions, that is, how two molecules bind to each other. For example, ibuprofen (a drug, acting as the ligand) binding to cyclooxygenase (an enzyme that produces molecules responsible for pain and inflammation).

To achieve this, the model's architecture was changed and, like RFdiffusion, it adopted generative diffusion, which is better suited to predicting the different possible configurations of more complex multimolecular systems. It also handles other molecules and complexes, such as RNA or antibody-antigen pairs, although the results in these cases are not as outstanding. What's promising about this tool is that it currently outperforms the standard technique for simulating ligand-protein interactions, computational docking, since it can capture how the protein's shape flexes when the ligand binds.
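Comparisons like this one are usually scored by how close a predicted ligand pose lies to the experimentally determined pose, measured as the root-mean-square deviation (RMSD) of the ligand atoms, with a value under 2 Å commonly counted as a success. A minimal sketch, assuming both poses are already in the same reference frame and list the same atoms in the same order; it ignores symmetry-equivalent atoms, which real benchmarks handle. The 4-atom "ligand" is made up.

```python
import numpy as np


def pose_rmsd(predicted: np.ndarray, reference: np.ndarray) -> float:
    """RMSD between matched atoms of two (N, 3) ligand poses, in Angstrom.

    Assumes both poses share a frame (e.g. after superimposing the protein
    pockets) and that atom i in one pose corresponds to atom i in the other.
    """
    if predicted.shape != reference.shape:
        raise ValueError("poses must list the same atoms in the same order")
    return float(np.sqrt(((predicted - reference) ** 2).sum(axis=1).mean()))


# Made-up 4-atom ligand; the prediction is shifted by 1 Angstrom along x.
reference = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0],
                      [1.5, 1.5, 0.0], [0.0, 1.5, 0.0]])
predicted = reference + np.array([1.0, 0.0, 0.0])

rmsd = pose_rmsd(predicted, reference)
print(rmsd, "success" if rmsd < 2.0 else "miss")   # 1.0 success
```

A single threshold keeps benchmark results easy to compare, at the cost of treating a 2.1 Å pose as a failure even if it is chemically close.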

What's next

Isomorphic Labs is one of several companies dedicated to using AI for drug design. Earlier this year, it announced plans to have a first drug developed with AlphaFold3 by the end of 2025, focusing on major diseases: neurodegenerative, cardiovascular, and oncological. In April, it secured US$600 million in its first investment round, and more recently, in July, its president, Colin Murdoch, told Fortune in an interview that the company is on track to begin clinical trials in humans. Isomorphic also works in partnership with major pharmaceutical companies such as Eli Lilly and Novartis.

All of this sounds quite promising. If it works, drug research and development, which typically takes a decade or more, could be completely transformed: not only would time and costs be reduced, but diseases that are currently hard to address could become treatable, with drugs designed through countless simulations and configurations until finding the one that acts most specifically and efficiently.

We know that AI is here to stay, and proteins are just one of many areas where it is strengthening the health sector. Its application has already improved diagnosis in some cases, for example in the early detection of lung cancer with models that analyze CT scans. In Argentina, there is even Entelai, a company founded in 2018 that is at the forefront of AI-assisted diagnostic imaging in the region.

I also don't want to leave out that AI advancement is impossible to think about without hardware and electronics keeping pace. That is why quantum computing, with its promise of much greater processing power for certain kinds of problems, is drawing more and more attention, and major companies such as Google and Microsoft have begun unveiling quantum processors.