In general, anything that's content advertising on social media is absolute garbage. But the other day I came across a sponsored tweet that was quite interesting. It was about the relationship between Large Language Models (LLM), known under the generic term "Artificial Intelligence", and the debate about "consciousness". That is, whether what the models do can be considered "thought".

In this regard, we had already made a first approach about what this implies for the philosophy of language and how it can be compared to the architectures of the great thinkers in the field. But reviewing what the sponsored tweet proposed, I realized there was a whole other body of literature on the

philosophy of mind

that we hadn't touched upon yet. And it makes sense, given that many of the key figures in the philosophy of mind are also important references in the philosophy of language. What is the mind if not a language-making machine? With that in mind, we can also ask: could a model that learns to produce language also be considered capable of thought?Languages, machines and worldsBasically, one of the contemporary problems about what the mind or the mental is, in the philosophy of mind, is whether it is possible to conceive the mind as something analogous to a "thinking machine". This idea, in its original phase, we owe to the introduction of the "

Todavía hay gente discutiendo si las LLMs tienen conciencia. Me da la sensación de que quienes creen eso nunca se tomaron el trabajo de entender cómo funciona una.

— Fede Caccia (@fedeecaccia) May 12, 2025

Lo básico: las LLMs no "entienden" nada.

Ajustan parámetros para minimizar una resta entre dos vectores… pic.twitter.com/6zFVMXXfBB

Turing machine", with which the mathematician, cryptographer and philosopher Alan Turing describes the theoretical/rudimentary functioning of what we now know as a "computer

". That triggered a lot of reflections about whether the mind and its main product, language,

But reviewing what the sponsored tweet proposed, I realized there was a whole other body of literature on the subject that challenged the very possibility of comparing them. This article is mostly dedicated to that literature.philosophy of mind are also important references in the philosophy of language. What is the mind if not a language-making machine? With that in mind, we can also ask: could a model that learns to produce language also be considered capable of thought?The contributions of Hilary PutnamThe central author we'll follow in this article is

Hilary Putnam, to whom the first formulation — or at least the most concrete one — of what we used to call machine functionalism is attributed. But additionally, Putnam has a series of articles in which he attempts to dismantle a gallery of skeptical arguments regarding The argument of Putnam is simple and in some way related to the hypothesis of the

monkeys with typewriters. The American philosopher and mathematician gives the example of a group of ants on a beach, who start walking and tracing grooves and — by chance, or rather statistics — end up forming a figure that perfectly resembles the face of A Midsummer Night's Dream

, by William Shakespeare. Can the monkeys write?What we know is that, as the product of statistical chance, both the monkeys and the ants create something that resembles a complex linguistic or visual expression. Both cases completely lack intention, meaning, and context. A large language model is like the monkeys and the ants but optimized by nearly a century of computer science, mathematics, and the semiconductor industry.From this brief moment of lucidity, I decided to talk to the GPT model again (now in its version 4 from Open AI) and ask it about semantic externalism, the functioning of language models,

sense

and reference. Everything that follows are the answers or conclusions from GPT-4 by Open AI.

Putnam and Twin EarthIn Hilary Putnam, semantic externalism is a philosophical theory that holds that the meaning of words does not depend solely on what goes on inside a speaker's head. His famous thesis: "Meanings ain't in the head"

.In 1975, the American philosopher, mathematician, and theoretical computer scientist developed a famous thought experiment called Twin Earth, which proposes imagining two identical planets: Earth and Twin Earth. In both, there are people who use the word "water," but on Twin Earth the substance is not H₂O but XYZ (identical in appearance and behavior). Even though the mental states of the speakers are identical, the word refers to different things, because the substance in the environment is different.What are the implications of this? That the mental content mental does not by itself determine the

subjective intention

of the speaker is insufficient to fix meaning without the contribution of the physical and social context.<span style="white-space: pre-wrap;">DG: Beto Galápagos</span>Saul Kripke and proper namesThe connection between Putnam and

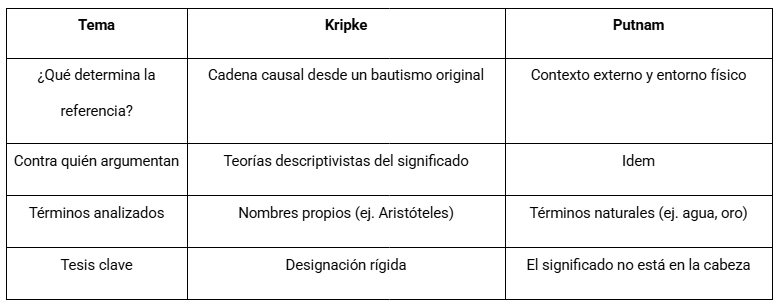

- Saul Kripke is fundamental to understanding the externalist turn in the philosophy of language of the '70s. In Naming and Necessity

- (1972), Kripke critiques the descriptivist theory of meaning (Frege/Russell), proposing that:

proper namesnamesproper names do not mean a description;instead, they are

rigid designators

that refer to the same object in all possible worlds.For example, "Aristotle" does not mean "Alexander's teacher" or "the author of the Metaphysics" but simply that guy, historically fixed in an original baptism.Putnam and natural kind termsPutnam takes up this idea of direct reference and extends it to natural kind terms

- like water,

- gold

,

tiger. According to him:we don't refer by an internal description (e.g. "colorless liquid"), but by a

that's why, even if we don't know the chemical composition of "water," the term refers to H₂O if that's the substance we've been calling "water" since the first use of the word.

Convergences between Putnam and KripkeBoth defend a causal-externalist theory

of reference, although Kripke focuses on proper names and Putnam on general or natural kind terms. The convergence is key: meaning is not in the head, but in the relationship between the speaker, the community, and the world.

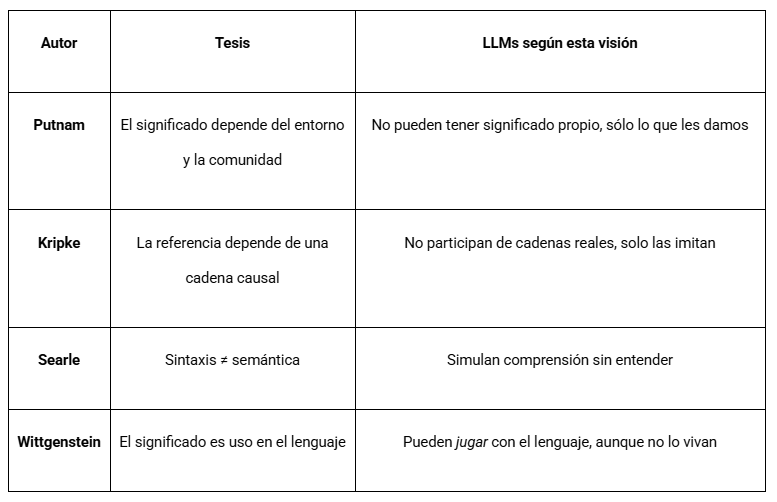

Earth 2The question of how language models (LLMs) can generate meaningful language without "knowing" what they're doing dialogues directly with Putnam's semantic externalism and with the tradition of philosophy of mind. Let's map the connections.What would Putnam say about LLMs?Putnam holds that meaning cannot be reduced to internal states (neither mental nor syntactic), but requires an external environment (chemical, social, historical), a causal connection with things in the world, and a linguistic community that gives sense and reference to words. An LLM has no access to the world nor does it participate in an intentional community. Therefore, according to classical externalism,

it lacks

genuine intentionality: it doesn't refer, it doesn't mean in the strong sense. It only simulates meaning by generating coherent sequences based on statistics and patterns.Analogy with Twin EarthThe LLM would be like a speaker on Twin Earth who says "water" without having interacted with H₂O or XYZ. It operates in a linguistic bubble where it handles signs without referents: it

doesn't know

(and can't know) what it refers to. This reveals the key point: LLMs can produce language that is meaningful for us, but not for them

.

Kripke and the lack of real referenceFor Kripke, referring requires a historical causal chain. An LLM does not participate in that chain. If it says "Napoleon," it does so through statistical patterns, not because it's connected to a naming event. Its language is a parasite of ours.So, do LLMs understand nothing?

It depends on what we mean by "understand." From strong semantic externalism: no, they understand nothing. But from a more functionalist or pragmatic perspective: they understand enough to operate in a communicative environment with humans. In this sense, we could say that an LLM "acts as if it understands", but what it produces only has meaning because we interpret it, anchored in our causal connections with the world.

. But we humans interpret them from

our world, giving them our meaning. What for them is structured noise, for us is language.<span style="white-space: pre-wrap;">DG: Beto Galápagos</span>Frege: sense and reference

- In this context, we cannot avoid mentioning

- Gottlob Frege

- , the German mathematician and philosopher, father of modern logic and one of the central pillars of analytic philosophy. In his classic essay

- "On Sense and Reference" (1892), Frege established a distinction that remains essential in the philosophy of language: the difference between sense

(

Sinn) and reference (Bedeutung).The

reference of an expression is the object in the world it designates. For example, "the morning star" and "the evening star" both refer to the same object: the planet Venus. But the sense of both expressions is different: the first connotes something you see at dawn, the second something you see at dusk. Thus, two expressions can have the same reference but different senses.

This Fregean distinction is key for our analysis because it introduces the possibility that language operates on two levels: one informational and one experiential. An LLM can reproduce

references correctly (associating "Venus" with relevant data about the planet), but does it have sense? In other words, does the model understand something when it generates a sentence, or does it only process signs without grasping their mode of presentation?

- Sense in Frege and its absence in LLMsFrom a Fregean perspective, the sense of an expression is the mode of presentation of the referent. When we say "the morning star," we are not only designating Venus but also presenting it in a specific way, loaded with context, perspective, and situation. The sense is what gives the expression its

- cognitive value, what makes it informative.An LLM operates only at the level of

- statistical associations between tokens. It can reproduce the expression "the morning star" and associate it with Venus, but it has no experience of dawn, no visual context, no temporal perception. For the model, there is no "mode of presentation": it only applies statistical rules to generate what sounds appropriate. "Meaning" only occurs

when someone reads and interprets it.Wittgenstein: meaning as useLudwig Josef Johann Wittgenstein takes another path. The Austrian philosopher, mathematician, and linguist does not seek an essence of meaning (like reference in Frege, or causal connection in Putnam). Instead, he asks how we use language in everyday life. He emphasizes, in fact, that

"the meaning of a word is its use in the language".There's no "internal translation" magic from language to thought.What matters is practice, the language game.Language is understood within a social fabric, full of rules, habits, contexts.Applied to LLMs, even though an LLM has no intentions or consciousness, it does participate in language games in the pragmatic sense, generating texts that can be used, responded to, refuted, incorporated into human practices. In this way, it generates functional meaning, although not intentional.

focus on

reference

- and semantic realism;

- Searle focuses on

- intentionality and consciousness; and Wittgenstein focuses on everyday use and form of life

.Ethics requires intentional agency

In most ethical traditions (Kantian, utilitarian, Aristotelian), the starting point is that:an agent understands what it does

,it can deliberate

about good or evil,and it has a purpose

or a will.LLMs meet none of these criteria: they have no consciousness, semantic understanding, self-interest, or ability to justify their actions. They are instruments

shifts

. There are at least three levels:• Developer/programmer responsibility (what data was used, what limitations the model has, how bias, toxicity, and filtering are configured)• User responsibility (how it's employed, whether it's used to manipulate, impersonate, or spread misinformation)

•

Responsibility of the

- ecosystem

- (what norms are built around these technologies, what degree of

- autonomy they are allowed in critical environments)

<span style="white-space: pre-wrap;">DG: Beto Galápagos</span>What if the model becomes autonomous?If we imagine a future where a system learns long-term, interacts with the real world, forms emergent intentions (simulated or not) and integrates socially, then some philosophers (like Floridi or Gunkel) argue that