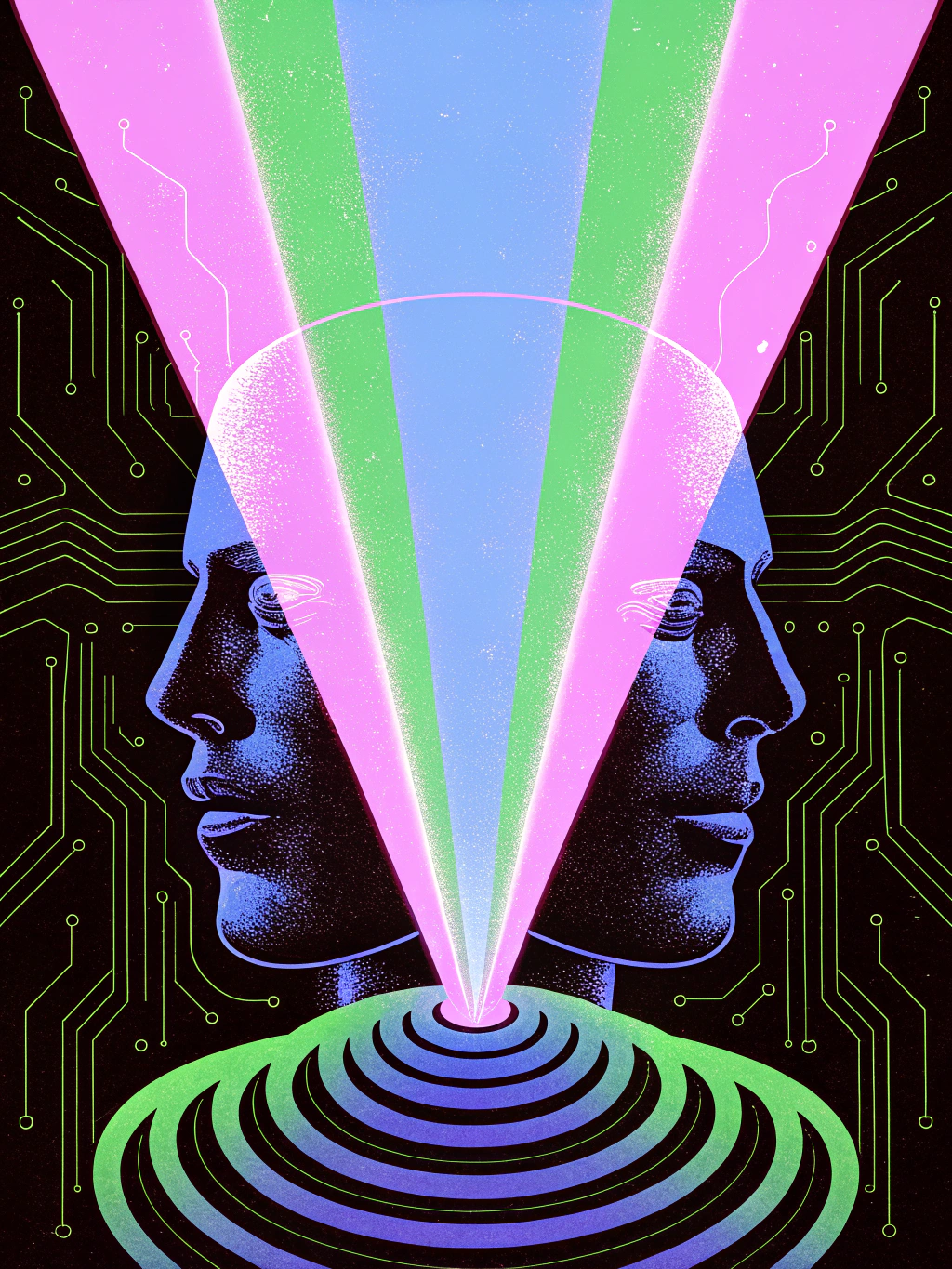

You didn't choose what you read today. An algorithm did.

Before you opened this article, a series of recommendation engines had already decided what appeared on your feed, which emails landed in your inbox, what news stories you saw, and which ads followed you across the web. You think you're browsing the internet. In reality, the internet is browsing you.

This isn't hyperbole. It's engineering. And understanding how it works is the first step toward something increasingly rare: thinking for yourself.

What Algorithms Actually Do

Let's strip away the mystique. An algorithm is a set of instructions that takes inputs, processes them, and produces outputs. When people talk about "the algorithm," they usually mean recommendation systems: the software that decides what content you see on social media, search engines, streaming platforms, and e-commerce sites.

These systems operate on a simple principle: predict what you'll engage with, then show you more of it. Engagement means clicks, likes, shares, comments, watch time — any measurable interaction. The goal isn't to inform you, entertain you, or make you smarter. The goal is to keep you on the platform as long as possible, because your attention is what's being sold to advertisers.
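To make that principle concrete, here is a minimal sketch of ranking a feed by predicted engagement. The `Post` type, the three predicted signals, and the weights are all assumptions invented for illustration; no platform publishes its real scoring formula.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    p_click: float           # predicted probability of a click (hypothetical model output)
    p_share: float           # predicted probability of a share
    expected_watch_s: float  # predicted watch time in seconds


# Hypothetical weights: how much each predicted interaction is "worth" to the ranker.
WEIGHTS = {"click": 1.0, "share": 3.0, "watch_per_minute": 0.5}


def engagement_score(post: Post) -> float:
    """Combine the predicted interactions into a single ranking score."""
    return (
        WEIGHTS["click"] * post.p_click
        + WEIGHTS["share"] * post.p_share
        + WEIGHTS["watch_per_minute"] * post.expected_watch_s / 60
    )


def rank_feed(candidates: list[Post]) -> list[Post]:
    """Order the feed by predicted engagement, highest first."""
    return sorted(candidates, key=engagement_score, reverse=True)


candidates = [
    Post("Calm explainer on tax policy", p_click=0.05, p_share=0.01, expected_watch_s=40),
    Post("You won't BELIEVE what they said", p_click=0.30, p_share=0.10, expected_watch_s=90),
    Post("Local weather update", p_click=0.10, p_share=0.00, expected_watch_s=15),
]

for post in rank_feed(candidates):
    print(f"{engagement_score(post):.2f}  {post.title}")
```

Nothing in the score measures accuracy or usefulness. In this toy setup the sensational post ranks first simply because its predicted interactions are higher.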

The inputs these systems use are staggering in scope:

- Your behavior: Every click, pause, scroll, like, share, and even the content you hovered over but didn't click.

- Your profile: Age, location, gender, language, device type, operating system.

- Your social graph: Who you follow, who follows you, who your friends interact with.

- Your purchase history: What you buy, what you almost bought, what you browsed.

- Millions of other users' data: The system finds people similar to you and assumes you'll like what they liked.

From these inputs, the algorithm builds a model of you — a digital shadow that knows your preferences, your vulnerabilities, your attention patterns, and your emotional triggers. Often better than you know them yourself.
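The "people similar to you" idea from the list above is commonly called collaborative filtering. The sketch below is a deliberately tiny version of it, assuming a hypothetical table of who engaged with which topic and using cosine similarity to compare users. Production systems are far larger and rely on learned models, so treat this as an illustration of the idea only.

```python
from math import sqrt

# Hypothetical engagement data: 1 means the user engaged with that topic.
interactions = {
    "you":   {"ai_doom": 1, "crypto": 1},
    "alice": {"ai_doom": 1, "crypto": 1, "prepping": 1},
    "bob":   {"gardening": 1, "cooking": 1},
}


def cosine_similarity(a: dict, b: dict) -> float:
    """Cosine similarity between two sparse engagement vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recommend(user: str, data: dict, top_n: int = 3) -> list:
    """Suggest unseen items, weighted by how similar their fans are to `user`."""
    seen = data[user]
    scores = {}
    for other, items in data.items():
        if other == user:
            continue
        sim = cosine_similarity(seen, items)
        if sim == 0:
            continue  # users with nothing in common contribute no suggestions
        for item, value in items.items():
            if item not in seen:
                scores[item] = scores.get(item, 0.0) + sim * value
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:top_n]


print(recommend("you", interactions))  # ['prepping']
```

Because "you" overlaps with "alice" and not with "bob", the only suggestion is the one extra thing alice engaged with. Gardening and cooking never surface.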

The Filter Bubble: Your Personalized Reality

In his 2011 book The Filter Bubble, Eli Pariser popularized the term "filter bubble" to describe what happens when algorithms curate your information diet: you end up in a self-reinforcing loop where you mostly see content that confirms your existing beliefs.

The mechanism is straightforward. You click on an article about, say, the dangers of artificial intelligence. The algorithm notes this and shows you more skeptical-of-AI content. You click again — it was interesting, after all. Now the algorithm is confident: you're an AI skeptic. Your feed fills with AI doom content. Meanwhile, the nuanced articles about AI's potential benefits never reach you. Not because they don't exist, but because the algorithm calculated you wouldn't click on them.

Over time, your worldview narrows without you noticing. You assume "everyone" shares your perspective because everyone in your feed does. The algorithm didn't change your mind — it built walls around it.
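The loop described above can be simulated with a toy model. Every number here is an assumption chosen for illustration: two topics start with equal weight, the reader clicks one of them only slightly more often (55% versus 45%), and a hypothetical ranker boosts each topic in proportion to the clicks it expects to receive.

```python
def simulate_feed(rounds: int, boost: float = 1.0) -> list:
    """Return the share of the feed given to "ai_doom" at the start of each round."""
    weights = {"ai_doom": 1.0, "ai_benefits": 1.0}
    click_rate = {"ai_doom": 0.55, "ai_benefits": 0.45}  # a mild initial preference
    history = []
    for _ in range(rounds):
        total = sum(weights.values())
        shares = {topic: w / total for topic, w in weights.items()}
        history.append(shares["ai_doom"])
        for topic in weights:
            # Expected clicks = how often the topic is shown * how often it is clicked.
            expected_clicks = shares[topic] * click_rate[topic]
            # The ranker rewards clicked topics with more exposure next round.
            weights[topic] *= 1 + boost * expected_clicks
    return history


history = simulate_feed(rounds=20)
for round_number in (0, 5, 10, 19):
    print(f"round {round_number:2d}: {history[round_number]:.0%} of feed is ai_doom")
```

The feed starts at an even split, and the small difference in click rate compounds each round until one topic dominates. The reader's preference never changed; only the exposure did.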

This isn't theoretical. Mozilla's crowdsourced "YouTube Regrets" research, published in 2021, found that most of the videos volunteers reported regretting had been surfaced by YouTube's own recommendation system. Critics describe a familiar pattern: start with moderate political commentary, and a chain of recommendations can lead you toward fringe content. The algorithm doesn't have an ideology. It has a metric: watch time. And extreme content, it turns out, is very good at keeping people watching.

The Attention Economy: You Are the Product

The business model is elegantly brutal. Platforms offer free services — search, social networking, video, email — and monetize the attention those services capture. Your attention is sold in millisecond auctions to advertisers who bid for the right to show you things.
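As a rough picture of what one of those auctions looks like, the sketch below runs a sealed-bid, second-price auction for a single ad impression. The pricing rule is an assumption chosen for illustration (ad exchanges have used both first-price and second-price rules), and the advertiser names, bids, and floor price are made up.

```python
def run_auction(bids: dict, floor: float = 0.0):
    """Return (winner, price) for one ad impression, or None if no bid clears the floor.

    Second-price rule: the highest bidder wins but pays the second-highest
    eligible bid, or the floor price if nobody else bid high enough.
    """
    eligible = {name: bid for name, bid in bids.items() if bid >= floor}
    if not eligible:
        return None
    ranked = sorted(eligible.items(), key=lambda kv: kv[1], reverse=True)
    winner, _ = ranked[0]
    price = ranked[1][1] if len(ranked) > 1 else floor
    return winner, price


# Hypothetical bids (dollars per thousand impressions) for one page view.
bids = {"shoe_brand": 4.20, "vpn_service": 3.75, "meal_kit": 1.10}
print(run_auction(bids, floor=1.00))  # ('shoe_brand', 3.75)
```

In practice the bids themselves depend on what the platform knows about you, which is why a more detailed profile makes your attention more valuable.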

This creates a perverse incentive structure:

1. Content that provokes emotion gets more engagement than content that informs. Outrage, fear, and anxiety are more "engaging" than nuance.

2. Platforms that maximize engagement win, so they optimize for emotional manipulation, not truth.

3. Creators who understand this dynamic game the system, producing increasingly sensationalized content because that's what gets rewarded.

4. Users who consume this content develop distorted worldviews, believing the world is more dangerous, divided, and chaotic than it actually is.

Tristan Harris, a former Google design ethicist, described this as a "race to the bottom of the brain stem." Platforms compete to trigger your most primitive responses — fight, flight, tribal allegiance — because those responses generate the most measurable engagement.

How Algorithms Exploit Cognitive Biases

Algorithms don't just show you content. They exploit the predictable flaws in human reasoning — cognitive biases — to maximize engagement.

Confirmation bias: The algorithm feeds you more of what you already believe, making your beliefs feel more justified than they are.

Availability heuristic: When your feed is full of a particular topic, you assume it's more important or more common than it actually is. Crime stories in your feed? The world feels dangerous. Success stories? Everyone's thriving except you.

Social proof: When the algorithm shows you that a post has 50,000 likes, you're more likely to engage with it. The platform displays engagement metrics precisely because they influence your behavior.

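Loss aversion: "Your streak is about to end." "This offer expires in 2 hours." Prompts like these are built around the fear of losing something you already have, which tends to weigh more heavily than the prospect of an equal gain. Even "You have 5 unread notifications" plays on the related fear of missing out.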

Variable reward schedules: Like a slot machine, your feed delivers rewards — interesting content, likes on your posts, new followers — at unpredictable intervals. This randomness is psychologically addictive. You keep scrolling because the next great post might be just below.

This isn't speculation. Internal documents from Facebook (leaked by Frances Haugen in 2021) showed that the company's own researchers had found its algorithms amplified hate speech, misinformation, and content harmful to teenagers' mental health. According to Haugen, the company repeatedly declined to make fixes that would have reduced engagement.

The Enshittification Cycle

Cory Doctorow calls this process enshittification: platforms start by being good to their users, then abuse those users to benefit business customers such as advertisers, and finally abuse those business customers too, clawing back the value for themselves. The algorithm is the engine of this decay.

In phase one, the algorithm shows you what you want. In phase two, it shows you what advertisers want you to see, mixed with enough of what you want to keep you from leaving. In phase three, it shows you whatever maximizes revenue, regardless of your interests or wellbeing.

We're deep in phase three. And the cost isn't just wasted time or annoying ads. The cost is cognitive: the algorithms are shaping how millions of people think, believe, and understand reality.

What You Can Do

You can't opt out of algorithms entirely — they're embedded in every digital service you use. But you can reduce their influence and reclaim some mental autonomy.

1. Recognize the manipulation

The most powerful defense is awareness. When you feel a strong emotional reaction to content — outrage, fear, righteous anger — pause. That reaction is exactly what the algorithm wanted. Ask yourself: is this genuinely important, or am I being manipulated into engagement?

2. Curate deliberately

Don't let algorithms decide your information diet. Subscribe to RSS feeds. Visit websites directly instead of relying on social media to surface their content. Read books. Listen to podcasts you chose, not ones that were "recommended for you." Choose your sources; don't let a machine choose them for you.
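If you are curious what "subscribing to RSS" means under the hood, a feed is just an XML file listing a site's posts. The sketch below parses a small hand-written RSS 2.0 sample with Python's standard library and lists the items newest first. The sample feed and its example.com links are placeholders; a real reader would download the XML from a site's feed URL.

```python
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime

# A tiny hand-written RSS 2.0 document standing in for a real feed.
SAMPLE_FEED = """<rss version="2.0">
  <channel>
    <title>Example Blog</title>
    <item>
      <title>Older post</title>
      <link>https://example.com/older</link>
      <pubDate>Mon, 01 Jan 2024 09:00:00 +0000</pubDate>
    </item>
    <item>
      <title>Newer post</title>
      <link>https://example.com/newer</link>
      <pubDate>Wed, 03 Jan 2024 09:00:00 +0000</pubDate>
    </item>
  </channel>
</rss>"""


def read_feed(xml_text: str) -> list:
    """Return feed items as (published, title, link) tuples, newest first."""
    root = ET.fromstring(xml_text)
    items = []
    for item in root.iter("item"):
        published = parsedate_to_datetime(item.findtext("pubDate"))
        items.append((published, item.findtext("title"), item.findtext("link")))
    # Plain reverse-chronological order: no engagement prediction involved.
    return sorted(items, key=lambda entry: entry[0], reverse=True)


for published, title, link in read_feed(SAMPLE_FEED):
    print(published.date(), title, link)
```

The only ordering rule is the publication date, so what you see depends on what you subscribed to and when it was published, not on what a model predicts you will click.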

3. Use tools that respect you

Switch to browsers and search engines that don't track you (Firefox + DuckDuckGo). Use ad blockers (uBlock Origin). Consider operating systems that don't spy on you. Every tracking pixel you block is one less data point feeding the algorithm.

4. Slow down

The algorithm is optimized for speed — quick scrolls, instant reactions, rapid consumption. The antidote is slowness. Read long articles. Watch full documentaries. Have conversations that last more than 280 characters. Depth is the enemy of algorithmic manipulation.

5. Seek disagreement

Deliberately expose yourself to perspectives you don't share. Not to argue, but to understand. If your feed is an echo chamber, break out of it. Follow people who challenge your assumptions. Read publications outside your ideological comfort zone.

6. Go offline

The simplest and most radical act: spend time away from screens. Walk without a podcast. Eat without scrolling. Think without Googling. The algorithm can't shape your thinking if you're not feeding it data.

The Bigger Picture

Algorithms aren't inherently evil. They're tools, and like all tools, they serve whoever controls them. The problem isn't that algorithms exist — it's that they're controlled by companies whose incentive is to capture attention, not to foster understanding.

Reclaiming your thinking in the age of algorithms isn't about going off the grid or smashing your phone. It's about intentionality: choosing what you consume, questioning what you see, and understanding the systems that are trying to think for you.

The ancient Stoics had a concept for this: the hegemonikon — the ruling faculty of the mind. The part of you that judges, decides, and gives meaning to experience. Algorithms can flood your senses with noise, but they can't override the hegemonikon. Not unless you let them.

The question isn't whether algorithms will continue to shape human cognition — they will. The question is whether you'll be aware of it. And awareness, in a world designed to distract, is the beginning of freedom.