Have you ever stopped to think about what a computer is? I'm not talking about the collection of hardware components that we commonly refer to as a PC. I'm pointing to the very concept of computing as the ability to process an input to produce an output following a set of programmable instructions. I'm asking what characteristics a machine should have to be considered a computer.

Surely, when we try to get close to a definition, the word "electronic" or, more precisely, the idea of electric circuits transmitting information will quickly come to mind. But could there be computers made of something radically different?

In this article, I propose that we dive into the universe of non-electronic computers: from Lego models and sequences of water jets to the use of real neurons to run artificial intelligence algorithms more efficiently and, why not, play Doom.

All you need is logic gates

The first question that arises is: why is electronics so special?

As a chemist, the first time I heard about Silicon Valley, I imagined a vast valley with an abundance of silicon minerals. In less nerdy terms: a place with lots of sand. It turns out that while there are indeed beaches in Silicon Valley, the reference to silicon has very different origins. This element is the main raw material for the fundamental technological component of the computing era: the transistor.

These little three-legged gadgets revolutionized electronics by making it possible to build logical operations into electric circuits, in the form of components known as logic gates.

While their direct ancestors, vacuum tubes, already performed exactly the same function and were part of the first computers, the transistor allowed for more precise control and the miniaturization of circuits that previously occupied entire rooms.

The transistor (technically, a gate built from a set of transistors, but let's continue) takes two input currents and, according to its function, produces an output current.

In a computer, the input and output currents are of the ON/OFF type, current or no current, meaning binary information: zeros and ones.

The best way to understand the idea of logic gates is through examples. Let's look at the most intuitive ones:

An AND logic gate will produce 1 only if both of its inputs are 1.

An OR gate will produce 1 if either of its two inputs is 1.
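As a minimal sketch of the two gates just described, here they are written as Python functions on the values 0 and 1. The function names `and_gate`, `or_gate`, and `truth_table` are illustrative choices, not part of any standard library.

```python
def and_gate(a: int, b: int) -> int:
    """Return 1 only if both inputs are 1."""
    return a & b


def or_gate(a: int, b: int) -> int:
    """Return 1 if at least one of the two inputs is 1."""
    return a | b


def truth_table(gate):
    """List (a, b, output) for every combination of binary inputs."""
    return [(a, b, gate(a, b)) for a in (0, 1) for b in (0, 1)]


# Print the full truth table of each gate.
for name, gate in (("AND", and_gate), ("OR", or_gate)):
    print(name)
    for a, b, out in truth_table(gate):
        print(f"  {a} {b} -> {out}")
```

Each gate has only four possible input combinations, so its whole behavior fits in a four-row table.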

Very nice, but so far none of this sounds very spectacular.

The interesting thing is that it has been proven that any algorithm, from adding two numbers on a calculator to ChatGPT, can be broken down into a series of fundamental logical operations like these. So, any other device capable of recreating logic gates could potentially be a computer.

In this example (above), a daring individual with possible socialization issues designed AND, OR, and NOT logic gates using Lego blocks. In this other example (below), a gadget that operates with jets of water allows for the recreation of logic gates in liquid circuits.
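To make the idea concrete, here is a rough sketch of how addition can be assembled from nothing but AND, OR, and NOT. All function names are illustrative, and the bit shifting inside `add` only plays the role of the wiring between gates; it performs no arithmetic on its own.

```python
def and_gate(a, b):
    return a & b


def or_gate(a, b):
    return a | b


def not_gate(a):
    return 1 - a


def xor_gate(a, b):
    # XOR built from the three basic gates: (a OR b) AND NOT (a AND b)
    return and_gate(or_gate(a, b), not_gate(and_gate(a, b)))


def full_adder(a, b, carry_in):
    """Add three bits and return (sum_bit, carry_out)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return sum_bit, carry_out


def add(x, y, bits=8):
    """Add two non-negative integers by chaining one full adder per bit."""
    carry = 0
    result = 0
    for i in range(bits):
        # Feed bit i of each number, plus the previous carry, into the adder.
        sum_bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= sum_bit << i
    return result  # any carry beyond `bits` is discarded


print(add(5, 3))
```

The same chaining trick, repeated at a vastly larger scale, is what lets simple gates add up to a calculator or a processor.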

The only reason these engineering masterpieces can't be combined to form actual computers is the impossibility of mass and miniaturized production, but the foundation is there. Transistors can be created at ridiculously small scales. In this video, the size of a transistor is compared to various objects: today's transistors are smaller than cells! Move aside, Lego enthusiast.

Moore's Law

If transistors are so great, why would anyone want to replace them?

According to Moore's Law, which is purely empirical and has no theoretical basis, the number of transistors that can fit in a microprocessor doubles every two years. For this to be possible, transistors need to keep getting smaller, but we are reaching a limit.
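A doubling every two years is exponential growth, which is easy to underestimate. The sketch below simply applies that rule; the starting count of 1,000 transistors is a made-up number chosen for illustration, not a historical figure.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_period: float = 2.0) -> float:
    """Apply the doubling rule: count * 2 ** (years / doubling_period)."""
    return initial_count * 2 ** (years / doubling_period)


# Hypothetical chip with 1,000 transistors, projected 20 years ahead.
# Twenty years is ten doublings, so the count grows by a factor of 2**10 = 1024.
print(projected_transistors(1_000, 20))
```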

Moore's Law held true until the mid-2010s, when it became increasingly difficult to keep shrinking transistors.

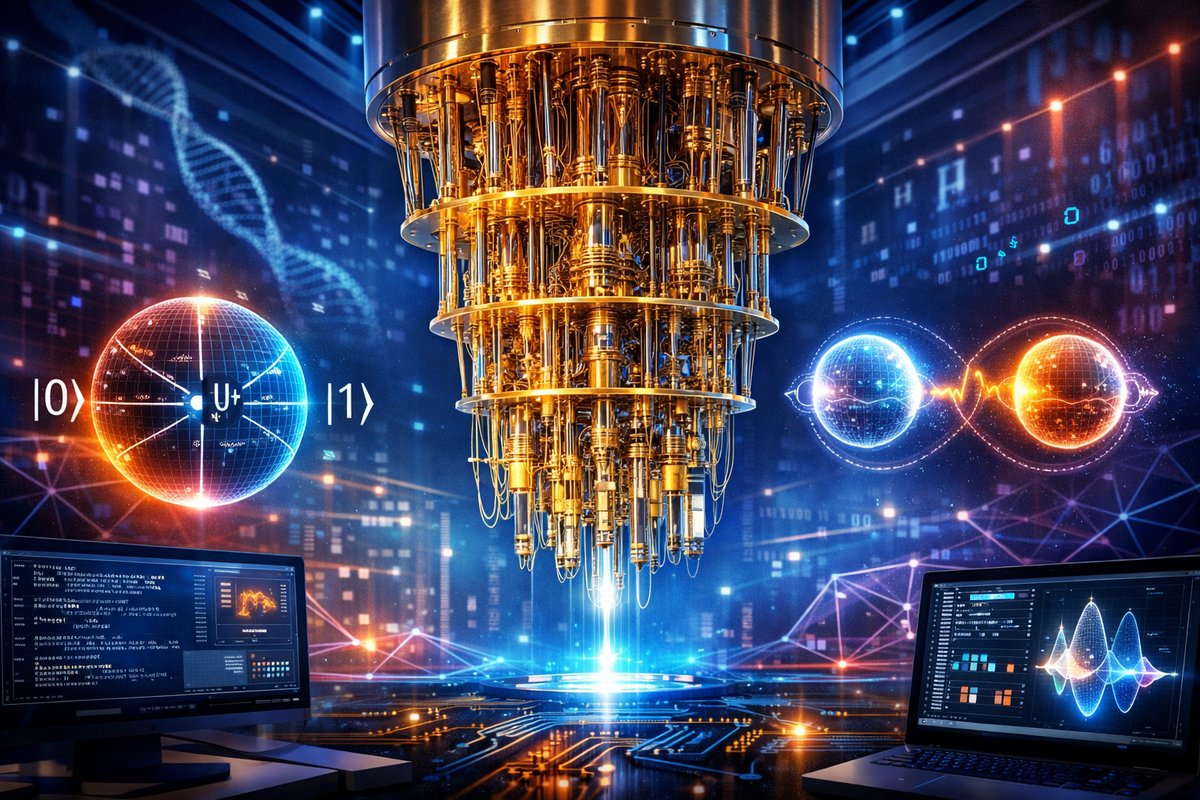

As we mentioned in a previous article, as we approach the atomic scale, the laws of physics as we know them change radically, and we enter the realm of quantum physics. The behavior of materials at this scale becomes probabilistic: electrons can spontaneously cross from one transistor to another, a phenomenon known as tunneling, which undermines the precise control that electronics depends on.

On the other hand, the amount of heat emitted by each transistor means that any more compact design carries the risk of reaching uncontrollable temperatures.

This point is particularly important, given that advancements in artificial intelligence require large computing centers that generate immense heat. It is estimated that data centers around the world consume more electricity today than entire developed countries, and the future outlook is even worse.

This is why alternatives to transistor-based computing began to be considered. One example of this is quantum computing, where logical operations are performed by qubits that can be atoms, semiconductor materials, or even light. Since we've already covered that topic, I want to mention other types of computing that are less flashy but no less interesting.

Optical computing

To understand the importance of optical computing, the first thing to highlight is that behind all the smoke of AI, there is just arithmetic: additions and multiplications, multiplications and additions. In particular, the vast majority of the computational (and energy) cost of the neural network algorithms that AI relies on is spent multiplying matrices.

This is why there has been an explosive development of GPU computing, that is, on graphics cards, since they have an architecture particularly efficient for these calculations. Bad news for gamers, who have seen the prices of graphics cards skyrocket in recent years.

How can light help with this problem?

While it's possible to conceive of light-based computing at the level of logic gates, as we saw in the first part, this approach focuses on delegating to light only the most computationally expensive part of the calculation: matrix multiplication.

The idea is as follows: instead of having the computer perform the multiplication directly, an optical system is designed whose physical behavior encodes the result. This way, it’s enough to measure the intensity of a light pattern to obtain the value of the operation. What's interesting about this approach is that it allows us to transition from a problem that, in a traditional computer, scales as N³, to one whose effective complexity scales as N², where N is the size of the matrix. This translates to staggering savings in computational time when N is very large.
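To see where the two scalings come from, here is a rough sketch that counts the work involved. The first function is the textbook way to multiply two N×N matrices, with a counter added. The second rests on a simplifying assumption of mine: that an optical system needs one intensity measurement per element of the result. Real devices will differ in the details.

```python
def matmul_counting(a, b):
    """Multiply two N x N matrices, counting every scalar multiplication."""
    n = len(a)
    multiplications = 0
    c = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            for k in range(n):
                c[i][j] += a[i][k] * b[k][j]
                multiplications += 1
    return c, multiplications


def optical_readouts(n):
    """Assumed cost of the optical approach: one measurement per output element."""
    return n * n


for n in (10, 100, 1000):
    # n ** 3 is the count matmul_counting would report for an n x n input.
    print(f"N={n}: {n ** 3} multiplications vs {optical_readouts(n)} readouts")
```

The gap between the two columns is a factor of N, so the larger the matrix, the bigger the payoff.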

Biological computing

Although computing with atoms or light already sounded like science fiction, we have yet to reach the most mind-bending segment of all: computing with real neurons.

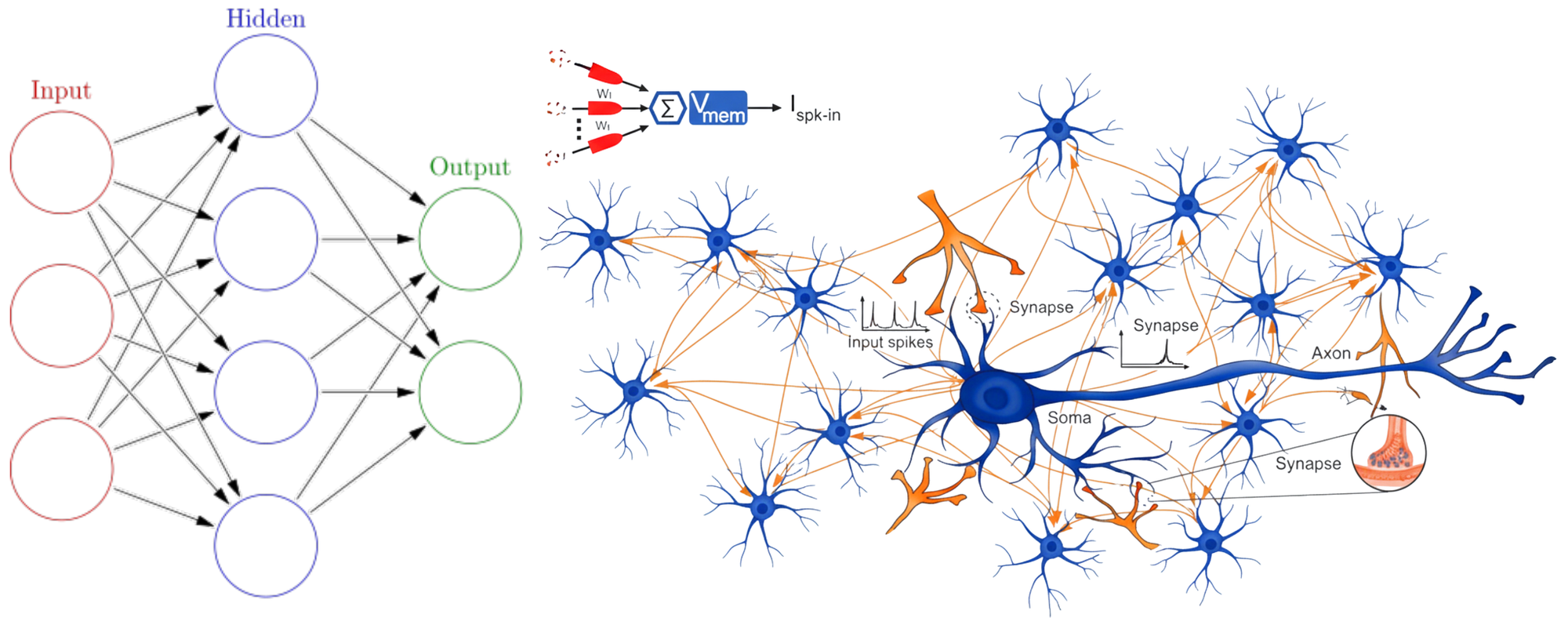

If artificial intelligence algorithms are based on neural networks, which in turn are inspired by the connections between neurons in the brain, it’s not far-fetched to think of computing architectures specifically designed for these tasks. This is known as neuromorphic computing.

The most common schemes are based on artificial components that mimic the behavior of neurons: they connect through electronic links and fire if they receive an input signal that exceeds a certain threshold. However, recent advancements use real neurons directly to power AI algorithms.
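The "fire above a threshold" behavior can be sketched in a few lines. This is a deliberately simplified model of an artificial neuron; the name `fires`, the weights, and the threshold value are illustrative assumptions rather than a description of any particular neuromorphic chip.

```python
def fires(inputs, weights, threshold):
    """Return True if the weighted sum of the inputs exceeds the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return total > threshold


# With these (arbitrary) weights, one active input is not enough to fire,
# but two active inputs together push the sum past the threshold.
print(fires([1, 0], weights=[0.6, 0.6], threshold=1.0))
print(fires([1, 1], weights=[0.6, 0.6], threshold=1.0))
```

With these particular numbers the neuron behaves like the AND gate from earlier, which hints at why networks of such units can compute.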

Beyond curiosity, there’s a very concrete reason to consider biological computing systems: the energy efficiency of living organisms far surpasses that of any computer. Just as there is software and hardware, the biological elements responsible for part of a computational calculation are referred to as wetware or biomimetic hardware.

At Cortical Labs, researchers created a cyborg that combines an electronic chip (the classic part of the computer) with a tissue of neurons that can "adapt" to perform a specific function. The system is then exposed to a task, such as playing a game, and receives a positive reward for each successful attempt. In this process, the tissue self-organizes in such a way as to maximize the reward: a living analogy to training a neural network.

Can it run Doom?

In the original project, the Frankenstein chip was designed to play Pong, but even though the invention is out of this world, if they really wanted to impress the public, they had to aim higher.

Doom is a game from 1993 that has garnered fans across several generations of gamers. After its initial success, it became a cult classic famous for its ability to be installed on practically any electronic device. This gave rise to the project "Can it run Doom?". From calculators to pregnancy tests, geeks around the world have devoted ridiculous amounts of time to running the game on the most improbable devices. Beyond the formal definition of a computer, it’s clear that any device worthy of being considered one must at least be capable of running Doom. If the cyborg from Cortical Labs wanted to dive headfirst into the geek world, it had to pass the ultimate test… and it did.

The magnitude of the achievement in this case is twofold, because Doom is not only installed on this computer, but the neurons learned to play it by themselves and are out there in cyberspace annihilating intergalactic bugs. Time to aim for the corner.

A fly trapped in a virtual reality

To conclude this journey through the latest advances in biological-computer integration, here’s a case that operates in the opposite direction.

The neuromorphic chip that plays Doom uses real neurons to enhance the efficiency of a computational algorithm. Could there then exist a computer that fully emulates the brain of a living being?

Recently, scientists from Eon Systems mapped the entire brain of a fly in a neural connection simulator, and the result was remarkable. The computer created a virtual environment that emulated terrain where the fly could move and search for food. The outcome was that the virtual fly behaved exactly like a real fly within this simulated reality.

This experiment demonstrates that it’s possible to transfer all the information stored in a brain to a virtual reality. Black Mirror seems tame in comparison.

Of course, the brain of a fly is vastly less complex than that of a human, but the groundwork for developing biological-virtual interfaces is already laid. Whether through incorporating living tissue into electronic circuits to enhance their efficiency or through mapping entire brains in software, cell-machine integration is advancing by leaps and bounds. Are you ready to enter the Matrix?